I hate to break it to you, but your fancy stereo is not actually hi-fi. That extra money you’re paying for your phone does not mean it’s using a true 5G network. And that “AI-powered” toothbrush of yours is just a toothbrush with an app.

They’re all marketing terms.

There is no meaningful universal standard for “Hi-Fi.” A tin can with a wire sticking out of it could be hi-fi if Sony slapped a “Hi-Fi” sticker on the packaging. “5G” is often a messy 4G LTE hybrid while that little symbol in the corner makes you feel like you’re tearing down the AT&T Autobahn.

And “AI”? Same deal. At this point, anything with an algorithm is getting shoved under the giant inflatable tent labeled ARTIFICIAL INTELLIGENCE.

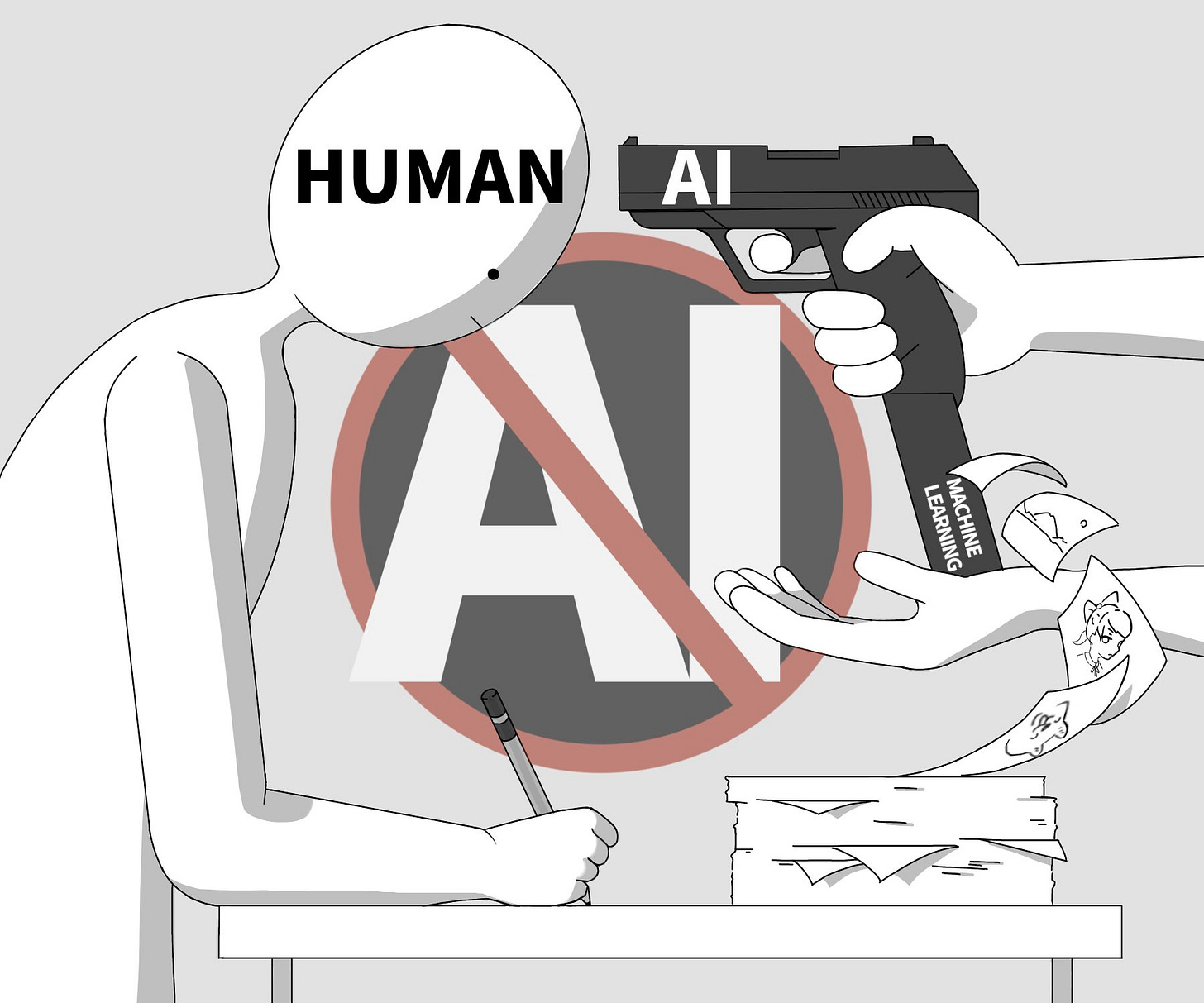

So when people say, “AI is bad,” it might be worth pausing for half a second and asking: what exactly are we talking about? Because if we don’t define it, we’re going to get dismissed as irrational Luddites frothing at the mouth every time a computer does something slightly more complicated than a toaster. Worse, we may actually become those Luddites.

You cannot just yell “AI bad” and dismiss someone’s life’s work because you suspect they might have touched ChatGPT. That is not a principled stance. It’s a fear response. For a lot of people, it feels safer to make a baseless accusation than risk being caught unknowingly enjoying something AI-generated.

And I do mean baseless. I can confidently say 100% of the people who have accused me of using AI have read 0% of my work. Not only do they not read a single word, they vigorously try to keep other people from reading it too, which is a neat trick for anyone pretending they’re defending human creativity.

I don’t think AI is inherently bad. I think AI is a tool, and like most tools, the real problem is the asshole holding it. Grok isn’t deepfaking nudes because it’s just that edgy. Some creep told it to. Nobody loses their job to AI because the computer got ambitious. They lose their job because corporations are functional sociopaths that view a worker’s livelihood as an inconvenience.

So how do we gauge AI? It’s easy.

A Very Scientific System I Made Up Five Minutes Ago

I think it helps to divide AI into three broad categories: Generative AI, Assistive AI, and Agentic AI. Those categories can then be sorted into three qualities: The Good, The Bad, and The Grey.

Generally, if a human is still doing the work, still getting paid, and merely using AI to make life easier, that’s good. If AI is replacing jobs, hollowing out creative work, or making everyone’s life worse, that’s bad. And since the only thing in life that truly operates in binary is a computer, there’s also a whole realm where AI is neither entirely good nor entirely bad. That’s the murky grey.

Let’s get into it.

1. Generative AI

This is what most people mean when they say “AI is bad.” It’s the flashy stuff that generates text, images, video, music, fake voices, fake faces, fake articles, and fake girlfriends.

The Good

There’s nothing wrong with using ChatGPT to answer a question. Asking an AI is often just easier than digging through ten pages of SEO-goosed Google results, and Google did that to itself by spending years turning search into a swamp of ads, affiliate links, and listicles. It can live with the consequences.

People complain that LLMs were trained on Reddit replies, but there’s a reason for that. We’ve been doing the exact same thing for years, cribbing answers off Reddit. Ask yourself how many times you’ve added “Reddit” to a Google search just to get a quick, useful answer.

There’s also nothing wrong with having an LLM help with background research, summarize a dense topic, or speed up the grunt work. It can save real time, and that alone should not invalidate the human work built on top of it.

The Bad

At the same time, generative AI was born from Silicon Valley scraping up every byte of human creativity it could get its hands on, training models on it, then flooding every platform with synthetic garbage until genuine human work became harder to find, harder to trust, and easier to ignore.

Most importantly, it threatens to make the actual humans who create things irrelevant. Which is fantastic news if your dream society is one giant server farm where everyone is simultaneously a DoorDash driver and too broke to afford to use DoorDash.

And they stole my fucking em dashes. Make this make sense:

For centuries, scribes used long strokes in their writing.

The em dash emerged as a standard in 15th-century printing to represent that stroke, named for its width being roughly equal to that of a capital M.

Typewriters come along, and there’s a small problem: they have no dedicated em dash key. So do authors stop using em dashes? No. They type multiple hyphens instead and trust typesetters to fix it later.

Computers become a thing. Again, there is no em dash button, but authors don’t stop using em dashes. They just continue typing out three hyphens.

In 1991, Unicode adds a proper em dash (U+2014). Authors continue to use em dashes, only now they’re holding down Alt, typing 0151, and scoffing at the simps still using three hyphens.

AI becomes a thing. How does it become a thing? By gobbling up the written word of every single author since Gutenberg to train Large Language Models to write like authors.

Now the LLMs trained to write like authors are using em dashes just like authors, and guess what happens? Does anyone accuse the AI of plagiarizing authors?

NO! Everyone accuses the authors of plagiarizing AI because they’re using the same fucking punctuation they have always used. Why? Because no human uses em dashes. There isn’t even a way to type an em dash on a computer. Duh.

The Grey

This is where people completely lose their minds.

If someone writes and performs a song, then uses AI to add backing vocals and bass, is that an AI-generated song?

I’d say no. But also yes. But mostly no—I don’t know. Was it good?

That’s the part nobody wants to ask. The moment AI enters the frame, people stop evaluating the result and start treating the process like a failed drug test.

Humans are creative, but only a handful are true Renaissance types who can do every part of a project well. AI is tempting because it can help shoulder the load. So the real question becomes: who actually made the thing? Was the human driving the work, or did they just wander in at the end and slap their name on the group project while the robot did all the labor?

A lot of the outrage here gets weirdly absolutist, fast. People act like if AI touched even a single pixel, the entire work is invalidated. They’d have artists and writers pissing in a cup before every submission just to make sure they’re not doping with ChatGPT.

But creative work has always had grey areas. Photobashing. Remixing. Collage. Digital cleanup. Sampling. Editing. If someone used AI in a limited, specific way inside a much larger human-made process, then you have to actually look at the process.

I know. Awful burden.

You can’t just stamp FAKE on everything and call it moral clarity.

2. Assistive AI

This is the version of AI I’m least worried about because it fits pretty neatly into the normal history of technological advancement. Assistive AI is not doing the whole job for you. It’s helping with the annoying, repetitive, monotonous parts so a human can focus on the parts that actually require judgment, taste, skill, or creativity.

In other words, it’s speeding up one specific part of a job, not replacing the entire thing.

The Good

Netflix using AI lip-sync tech to better match dubbed dialogue to actors’ mouth movements is a good example. The actors still act. The voice actors still dub. Nobody stops being an artist. The final product just gets polished in a way that makes the language swap less distracting.

Grammarly is another one. If it catches your typo in ten seconds and saves you from publishing a “pubic statement,” that is not the fall of civilization. That is mercy.

The Bad

The bad side is that even assistive tools can make some jobs less necessary over time, but that’s also just how technology has always worked.

There was a time when chair-making was a specialized craft. Now we buy furniture from IKEA, spend four hours assembling Swedish particle board with an Allen wrench, and call it convenience. Humans have been mechanizing skilled labor for centuries. We love doing that. Turning skilled careers into cheaper, thinner, more disposable versions of themselves has always been one of technology’s nastier hobbies.

The Grey

That doesn’t mean every change is bad, or that the tool itself is to blame.

Rotoscoping is a good example. It’s an art form, a real skill, and part of the backbone of VFX work. But it’s also increasingly being replaced or accelerated by AI-powered tools in programs like After Effects.

Is that bad? Maybe. Or maybe it makes artists ten times more productive and frees them up to focus on higher-level work. The answer depends on whether the worker is still valued, still paid, and still treated like a person rather than a disposable cog in someone else’s quarterly report.

That’s the real issue, over and over again. It’s not the tool. It’s the labor relationship around the tool.

Don’t blame the plantation’s bullshit on the cotton gin.

3. Agentic AI

This is the one that should actually make people nervous.

Agentic AI is not just generating content or assisting with tasks. It is meant to perform complex human jobs for you. It is the wet dream of every executive who has ever looked at payroll and thought, “What if all these people didn’t need food?”

The Good

It’s one step closer to a real-life Asimov robot.

That’s it. That is honestly the only upside I can come up with that doesn’t immediately veer into dystopian nonsense.

The Bad

In a business environment, there is no version of agentic AI that does not threaten jobs. That is the point of it. Nobody is funding this stuff out of some noble desire to free humanity from email fatigue. They want labor replacement.

You do not buy Rosie the Robot and also keep paying the housekeeper.

Agentic AI is not just another productivity tool. It is a direct attempt to automate white-collar and middle-class labor at scale, and if it succeeds, it threatens to hollow out one of the few remaining economic buffers keeping this country from turning into a techno-fascist hellscape.

The Grey

Maybe, if we’re lucky, we end up with C-3PO.

More realistically, in the short term, we end up with a bunch of half-functional fake employees making everything worse while CEOs insist this is innovation because one of the bots successfully scheduled a Zoom call.

In the long term, we get a truly American version of the future: fewer jobs, worse service, more surveillance, and a press release about how excited everyone should be.

So, basically, Soylent Green with monthly subscription tiers.

Conclusion

“AI is bad” is an understandable instinct. What people are reacting to is real. The theft, the slop, the labor replacement, the cultural erosion, the environmental cost, the collapse of trust online. None of that is imaginary. It’s happening right now.

But “AI is bad” is too blunt to be useful if you don’t define it.

It lumps together job-killing automation, harmless spellcheck, research assistants, image generation, lip-sync cleanup, fake-news factories, and your phone suggesting a generic reply to a boring work email as if they’re all the same thing.

They are not.

The smarter position is not “AI good” or “AI bad.” It’s asking who is using the AI, what kind of AI it is, and at whose expense.

Everything else is bumper-sticker thinking.